B&W Photos in the Digital Age: Are Monochrome Images Made with Software Truly B&W?

© Seth Shostak

Mark was a fellow radio astronomer, full of insights. One day in a conversation about photography he said something that caused my eyebrows to lift: “Well, as we all know, color film is never sharp.”

We all know? Really? I had never hesitated to load my cameras with Ektachrome or any other chrome for fear that the results would somehow be murky.

But what Mark was getting at was this: As a consequence of a few million years of Darwinian evolution, our eyes have three types of color receptors, casually described as red, green, and blue. That’s better than your dog, who has only two. (But don’t feel superior. Pigeons have five.) For a film manufacturer, realistic color therefore requires that the emulsion have three different, stacked color layers.

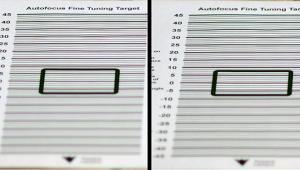

Making stratified emulsions was a technical triumph that took years to get right, but the layer-cake architecture of color film inevitably led to slight misfocus as well as scattered light. Compared with black-and-white emulsions, color emulsions were, indeed, somewhat less sharp.

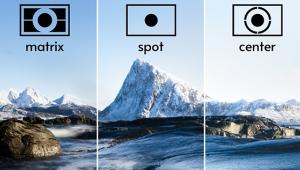

Enter digital cameras. Most of them don’t have sensors with layers like film. Instead, the red, green, and blue receptors are arrayed beside one another, usually in a pattern first developed in the 1970s by Bryce Bayer, a Kodak employee. The sensor is monochrome, but is fronted by a filter that allows only a single color to fall on each sensor pixel. If you look at the tiling of these colors, you’ll note that 50 percent of them are green. The other half is split equally between red and blue.
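To see where that 50/25/25 split comes from, here is a minimal Python sketch (using NumPy) that tiles the 2x2 Bayer unit across a small sensor and counts the filter colors. It assumes the common RGGB arrangement; real sensors may start the tile on a different corner (BGGR, GRBG, GBRG), which shifts the layout but not the proportions. The function name `bayer_pattern` is made up for illustration.

```python
import numpy as np


def bayer_pattern(rows, cols):
    """Return an array of filter labels ('R', 'G', 'B') for an assumed RGGB Bayer tiling."""
    # One 2x2 tile: red and green on the first row, green and blue on the second.
    tile = np.array([["R", "G"],
                     ["G", "B"]])
    # Repeat the tile enough times to cover the sensor (ceiling division), then crop.
    reps = (-(-rows // 2), -(-cols // 2))
    return np.tile(tile, reps)[:rows, :cols]


pattern = bayer_pattern(4, 6)
print(pattern)
for color in "RGB":
    # Fraction of photosites that sit under this color of filter.
    print(color, float(np.mean(pattern == color)))
```

Every 2x2 tile holds one red, two green, and one blue filter, so on any sensor with even dimensions green covers exactly half the photosites and red and blue a quarter each.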

Why so much emphasis on the verdure? Because human vision is most sensitive to green, thanks to the arboreal habits of our predecessors. Most of the visual detail you see comes from your eye’s response to green light.

In any event, the software in your camera reads out each color receptor group, or RGB channel, in this Bayer mosaic, and puts together an image that gives a nice approximation to what you thought you saw in front of your lens.
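Filling in the two missing colors at every photosite is usually called demosaicing. Camera makers use their own, generally undisclosed and more elaborate algorithms; the Python sketch below instead uses the simplest textbook approach, bilinear interpolation, on an assumed RGGB layout. Each missing value is estimated as a weighted average of the nearest photosites that did record that color. The helper names (`rggb_masks`, `mosaic`, `filter3x3`, `demosaic_bilinear`) are hypothetical.

```python
import numpy as np

# Normalized-convolution weights: direct neighbors count twice as much as diagonals.
KERNEL = np.array([[1.0, 2.0, 1.0],
                   [2.0, 4.0, 2.0],
                   [1.0, 2.0, 1.0]])


def rggb_masks(h, w):
    """Boolean masks for the photosites under red, green, and blue filters (RGGB assumed)."""
    rows, cols = np.indices((h, w))
    red = (rows % 2 == 0) & (cols % 2 == 0)
    blue = (rows % 2 == 1) & (cols % 2 == 1)
    green = ~(red | blue)
    return red, green, blue


def mosaic(rgb):
    """Simulate the sensor: keep only the one color each photosite actually records."""
    red, green, _ = rggb_masks(*rgb.shape[:2])
    return np.where(red, rgb[..., 0], np.where(green, rgb[..., 1], rgb[..., 2]))


def filter3x3(img, kernel):
    """Apply a symmetric 3x3 kernel, treating pixels beyond the border as zero."""
    h, w = img.shape
    padded = np.pad(img, 1, mode="constant")
    out = np.zeros((h, w))
    for dy in range(3):
        for dx in range(3):
            out += kernel[dy, dx] * padded[dy:dy + h, dx:dx + w]
    return out


def demosaic_bilinear(raw):
    """Rebuild an (H, W, 3) image from an (H, W) RGGB mosaic; needs H, W >= 2."""
    raw = np.asarray(raw, dtype=float)
    planes = []
    for mask in rggb_masks(*raw.shape):
        sparse = np.where(mask, raw, 0.0)                # measured values, zeros elsewhere
        weight = filter3x3(mask.astype(float), KERNEL)   # total weight of nearby samples
        estimate = filter3x3(sparse, KERNEL) / weight    # weighted mean of nearby samples
        planes.append(np.where(mask, raw, estimate))     # keep measured samples unchanged
    return np.stack(planes, axis=-1)


# A flat patch of color survives the round trip exactly.
scene = np.ones((4, 6, 3)) * np.array([0.8, 0.5, 0.2])
print(np.allclose(demosaic_bilinear(mosaic(scene)), scene))  # True
```

On a uniform patch the reconstruction is exact, but across a sharp edge the averaged neighbors straddle the edge, which is one source of the color fringing and other artifacts that Bayer sensors are known for.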

But what if you want black and white? What if you want the sculpted contours of a Yousuf Karsh portrait, or the eerie, flat monochrome of a 1950s sci-fi film?

Not a problem. Your image processing software will happily (or at least easily) combine the components of your photo to make a single luminance image. With little more than mouse clicks, you can desaturate any color image, turning it into black and white. If you’re a Photoshop user, you can use Image>Adjustments>Black & White, and entertain yourself with the sliders.

But is this accurate black and white? Does it correctly represent the intensities of the scene?

First ask yourself, “Do I care?” In most cases you won’t, unless you’re doing some specialized technical work. But even if you do, the software can arrange for the correct weighting of the three color channels (roughly 29 percent red, 63 percent green, 8 percent blue) to mimic the response of your retina.
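In code, that weighting is just a weighted sum of the three channels at every pixel. Here is a minimal NumPy sketch, assuming float pixel values in [0, 1]. By default it uses the article’s rough percentages; for comparison it also defines the ITU-R BT.601 luma coefficients (0.299, 0.587, 0.114), a widely used broadcast standard. It is not a description of what Photoshop or any other particular program does internally, and the function name `to_monochrome` is hypothetical.

```python
import numpy as np

# Rough channel weights (R, G, B) quoted in the text.
ARTICLE_WEIGHTS = (0.29, 0.63, 0.08)
# ITU-R BT.601 luma coefficients, a common standard alternative.
BT601_WEIGHTS = (0.299, 0.587, 0.114)


def to_monochrome(rgb, weights=ARTICLE_WEIGHTS):
    """Collapse an (..., 3) RGB array into a single luminance value per pixel."""
    w = np.asarray(weights, dtype=float)
    w = w / w.sum()  # normalize so that white stays white
    return np.asarray(rgb, dtype=float) @ w


# Pure red, pure green, pure blue, and white, as a 1 x 4 image.
swatches = np.array([[[1.0, 0.0, 0.0], [0.0, 1.0, 0.0],
                      [0.0, 0.0, 1.0], [1.0, 1.0, 1.0]]])
print(to_monochrome(swatches))
print(to_monochrome(swatches, BT601_WEIGHTS))
```

A saturated green patch comes out far brighter than an equally saturated blue one, which is the retina’s bias expressed as arithmetic.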

The more interesting thing may be to deliberately skew the color brew. For example, turn down the blue, which will darken daytime skies, allowing clouds to stand out, since they’re as bright in the red and green as they are in the blue.

Of course, that’s pretty much exactly what you’d do with black-and-white film using a yellow or red filter. But now you can imitate the effects of just about any filter, including some you might never have mail-ordered. Dial up the green to make skin tones pop. Or experiment with a purple filter to subdue foliage.
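One way to model such a virtual filter is to scale each channel’s weight by how much of that color the filter would pass, then renormalize so that a neutral gray keeps its brightness (the digital counterpart of opening up by the filter factor). The Python sketch below assumes the article’s rough weights; the transmission values, preset names, and sample colors are hypothetical, chosen only to illustrate the effects described above, and are not measured filter data.

```python
import numpy as np

# Channel weights (R, G, B) quoted in the text.
BASE_WEIGHTS = np.array([0.29, 0.63, 0.08])

# Hypothetical per-channel transmissions for "virtual filters" (illustrative only).
FILTERS = {
    "none": (1.0, 1.0, 1.0),
    "no_blue": (1.0, 1.0, 0.0),   # simply turn the blue channel down
    "yellow": (1.0, 0.9, 0.2),
    "red": (1.0, 0.2, 0.05),
    "green": (0.3, 1.0, 0.3),
    "purple": (1.0, 0.2, 1.0),    # passes red and blue, holds back green
}


def filtered_monochrome(rgb, filter_name="none"):
    """Weight the channels by a virtual filter, then collapse to one luminance value."""
    w = BASE_WEIGHTS * np.asarray(FILTERS[filter_name])
    w = w / w.sum()  # re-expose so neutral grays keep their brightness
    return np.asarray(rgb, dtype=float) @ w


# Hypothetical sample colors (RGB values in [0, 1]).
sky = [0.35, 0.55, 0.90]
cloud = [0.90, 0.90, 0.90]
foliage = [0.20, 0.50, 0.15]

for name in ("none", "no_blue", "red", "purple"):
    values = [round(float(filtered_monochrome(c, name)), 3) for c in (sky, cloud, foliage)]
    print(name, values)
```

Because the white cloud reflects all three channels equally, the renormalization leaves it unchanged under every filter, while the blue sky darkens when blue is suppressed and the green foliage darkens under the purple filter.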

Without doubt, digital imagery, with its easy manipulation of the individual color components of your picture, offers creative control that monochrome processes never had. That’s the good news. The bad news is that, because most sensors use a Bayer matrix or a variation thereof, the red and blue components will have less detail than the green. And even the green is derived from only half the receptors on your sensor. Various image artifacts—a euphemism for “defects”—are the consequence.

If black and white is merely an occasional pursuit, this won’t worsen your life. But if you’re one for whom monochrome is a mainstay, there are remedies. You can consider the Leica M Monochrom camera, which doesn’t have that Bayer filter, or one of the Sigma models outfitted with the Foveon X3 sensor. The latter sports the equivalent of stacked receptors similar to color film, but is much thinner—and sharper.

That’s something Mark would appreciate.

Seth Shostak is an astronomer at the SETI Institute who thinks photography is one of humanity’s greatest inventions. His photos have been used in countless magazines and newspapers, and he occasionally tries to impress folks by noting that he built his first darkroom at age 11. You can find him on both Facebook and Twitter.

(Editor’s Note: Technically Speaking is a column by astronomer Seth Shostak that explores and explains the science of photography.)